Mixed ordered logit

Contents

Mixed ordered logit¶

We begin by performing the necessary imports:

import os

os.environ["CUDA_VISIBLE_DEVICES"]="0"

import sys

sys.path.insert(0, "/home/rodr/code/amortized-mxl-dev/release")

import logging

import time

import matplotlib.pyplot as plt

import numpy as np

import pandas as pd

import torch

# Fix random seed for reproducibility

np.random.seed(42)

Import previously simulated data¶

For this demo, we will use previously generated simulated data. Since this is artificial data, we actually know the parameter values that were used to generate the choices: [1, -1, 1, -1]. The (log) cutoff points used to generate the data were: [-0.7 -0.2 0.5]. Our goal is to see whether our model implementation can accurately recover the true parameters that were used to generate the data.

df = pd.read_csv('../data/fake_data_ordered.csv', index_col=0)

num_resp = len(df)

df['indID'] = np.arange(num_resp)

df.head()

| choice | x0 | x1 | x2 | x3 | indID | |

|---|---|---|---|---|---|---|

| 0 | 0 | 1.0 | 0.585995 | 0.667338 | 0.681352 | 0 |

| 1 | 0 | 1.0 | 0.476451 | 0.026819 | 0.443944 | 1 |

| 2 | 0 | 1.0 | 0.753613 | 0.768395 | 0.468803 | 2 |

| 3 | 2 | 1.0 | 0.605222 | 0.862393 | 0.434364 | 3 |

| 4 | 0 | 1.0 | 0.142990 | 0.001069 | 0.608124 | 4 |

Mixed Logit specification¶

We now make use of the developed formula interface to specify the utilities of the mixed logit model.

We begin by defining the fixed effects parameters, the random effects parameters, and the observed variables. This creates instances of Python objects that can be put together to define the utility functions for the different alternatives.

Once the utilities are defined, we collect them in a Python dictionary mapping alternative names to their corresponding expressions.

Note that, since this is an ordinal regression model, there is only one single utility. The observed values correspond to different “levels” of the response variable.

from core.dcm_interface import FixedEffect, RandomEffect, ObservedVariable

import torch.distributions as dists

# define fixed effects parameters

B_X0 = FixedEffect('BETA_X0')

B_X1 = FixedEffect('BETA_X1')

# define random effects parameters

B_X2 = RandomEffect('BETA_X2')

B_X3 = RandomEffect('BETA_X3')

# define observed variables

for attr in df.columns:

exec("%s = ObservedVariable('%s')" % (attr,attr))

# define utility functions

V1 = B_X0*x0 + B_X1*x1 + B_X2*x2 + B_X3*x3

# associate utility functions with the names of the alternatives

utilities = {"ALT1": V1}

We are now ready to create a Specification object containing the utilities that we have just defined. Note that we must also specify the type of choice model to be used - a mixed logit model (MXL) in this case.

Note that we can inspect the specification by printing the dcm_spec object.

from core.dcm_interface import Specification

#Logit(choice, utilities, availability, df)

#Logit(choice_test, utilities, availability_test, df_test)

# create MXL specification object based on the utilities previously defined

dcm_spec = Specification('MXL', utilities)

print(dcm_spec)

----------------- MXL specification:

Alternatives: ['ALT1']

Utility functions:

V_ALT1 = BETA_X0*x0 + BETA_X1*x1 + BETA_X2_n*x2 + BETA_X3_n*x3

Num. parameters to be estimated: 4

Fixed effects params: ['BETA_X0', 'BETA_X1']

Random effects params: ['BETA_X2', 'BETA_X3']

Once the Specification is defined, we need to define the DCM Dataset object that goes along with it. For this, we instantiate the Dataset class with the Pandas dataframe containing the data in the so-called “wide format”, the name of column in the dataframe containing the observed choices and the dcm_spec that we have previously created.

Note that since this is panel data, we must also specify the name of the column in the dataframe that contains the ID of the respondent (this should be a integer ranging from 0 the num_resp-1).

from core.dcm_interface import Dataset

# create DCM dataset object

dcm_dataset = Dataset(df, 'choice', dcm_spec, resp_id_col='indID')

Preparing dataset...

Model type: MXL

Num. observations: 10000

Num. alternatives: 1

Num. respondents: 10000

Num. menus: 1

Observations IDs: [ 0 1 2 ... 9997 9998 9999]

Alternative IDs: None

Respondent IDs: [ 0 1 2 ... 9997 9998 9999]

Availability columns: None

Attribute names: ['x0', 'x1', 'x2', 'x3']

Fixed effects attribute names: ['x0', 'x1']

Fixed effects parameter names: ['BETA_X0', 'BETA_X1']

Random effects attribute names: ['x2', 'x3']

Random effects parameter names: ['BETA_X2', 'BETA_X3']

Alternative attributes ndarray.shape: (10000, 1, 4)

Choices ndarray.shape: (10000, 1)

Alternatives availability ndarray.shape: (10000, 1, 1)

Data mask ndarray.shape: (10000, 1)

Context data ndarray.shape: (10000, 0)

Neural nets data ndarray.shape: (10000, 0)

Done!

As with the specification, we can inspect the DCM dataset by printing the dcm_dataset object:

print(dcm_dataset)

----------------- DCM dataset:

Model type: MXL

Num. observations: 10000

Num. alternatives: 1

Num. respondents: 10000

Num. menus: 1

Num. fixed effects: 2

Num. random effects: 2

Attribute names: ['x0', 'x1', 'x2', 'x3']

Bayesian Mixed Ordered Logit Model in PyTorch¶

We will modify the generative process of the core MXL model by introducing a vector of \(C-2\) cutoff points \(\boldsymbol\kappa\), where \(C\) denotes the number of levels in the response variable. The cutoffs will then be used by the OrderedLogit function to compute the probabilities of each level.

The resulting generative process is the following (changes to the core MXL model are highlighted in red):

Draw cutoff parameters \(\boldsymbol\kappa \sim \mathcal{N}(\boldsymbol\eta_0, \boldsymbol\Phi_0)\)

Draw fixed taste parameters \(\boldsymbol\alpha \sim \mathcal{N}(\boldsymbol\lambda_0, \boldsymbol\Xi_0)\)

Draw mean vector \(\boldsymbol\zeta \sim \mathcal{N}(\boldsymbol\mu_0, \boldsymbol\Sigma_0)\)

Draw scales vector \(\boldsymbol\theta \sim \mbox{half-Cauchy}(\boldsymbol\sigma_0)\)

Draw correlation matrix \(\boldsymbol\Psi \sim \mbox{LKJ}(\nu)\)

For each decision-maker \(n \in \{1,\dots,N\}\)

Draw random taste parameters \(\boldsymbol\beta_n \sim \mathcal{N}(\boldsymbol\zeta,\boldsymbol\Omega)\)

For each choice occasion \(t \in \{1,\dots,T_n\}\)

Draw observed ordered response \(y_{nt} \sim \mbox{OrderedLogit}(\boldsymbol\alpha, \boldsymbol\beta_n, \boldsymbol\kappa, \textbf{X}_{nt})\)

where \(\boldsymbol\Omega = \mbox{diag}(\boldsymbol\theta) \times \boldsymbol\Psi \times \mbox{diag}(\boldsymbol\theta)\).

For the OrderedLogit probability, we assume:

where \(\kappa_0\) is defined as \(-\infty\) and \(\kappa_C\) as \(+\infty\) (hence, the number of \(\kappa_i\) variables that we need to perform inference on is only \(C-2\)). Note that we use \(\textbf{x}_{ntj,F}\) and \(\textbf{x}_{ntj,R}\) to distinguish between fixed effects and random effects, respectively.

This model is already implemented in the class TorchMXL_Ordered. At the end of this notebook, we provide an explanation of how this extension was implemented.

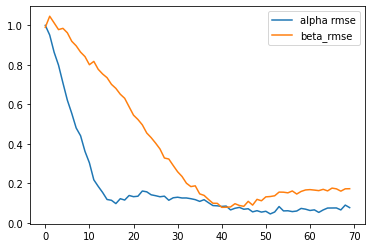

We can instantiate this model from the TorchMXL_Ordered using the following code. We can the run variational inference to approximate the posterior distribution of the latent variables in the model. Note that since in this case we know the true parameters that were used to generate the simualated choice data, we can pass them to the “infer” method in order to obtain additional information during the ELBO maximization (useful for tracking the progress of VI and for other debugging purposes).

%%time

from core.torch_mxl_ordered import TorchMXL_Ordered

# instantiate MXL model

num_categories = 5

mxl = TorchMXL_Ordered(dcm_dataset, num_categories, batch_size=num_resp, use_inference_net=False, use_cuda=True)

# run Bayesian inference (variational inference)

results = mxl.infer(num_epochs=7000, true_alpha=np.array([1, -1]), true_beta=np.array([1, -1]))

[Epoch 0] ELBO: 56100; Loglik: -20462; Acc.: 0.388; Alpha RMSE: 1.000; Beta RMSE: 0.990

[Epoch 100] ELBO: 22516; Loglik: -19553; Acc.: 0.388; Alpha RMSE: 0.951; Beta RMSE: 1.046

[Epoch 200] ELBO: 24147; Loglik: -14972; Acc.: 0.388; Alpha RMSE: 0.864; Beta RMSE: 1.013

[Epoch 300] ELBO: 15727; Loglik: -13902; Acc.: 0.388; Alpha RMSE: 0.798; Beta RMSE: 0.979

[Epoch 400] ELBO: 16683; Loglik: -13879; Acc.: 0.388; Alpha RMSE: 0.709; Beta RMSE: 0.985

[Epoch 500] ELBO: 16292; Loglik: -13459; Acc.: 0.388; Alpha RMSE: 0.621; Beta RMSE: 0.962

[Epoch 600] ELBO: 16674; Loglik: -13230; Acc.: 0.388; Alpha RMSE: 0.554; Beta RMSE: 0.920

[Epoch 700] ELBO: 16519; Loglik: -13196; Acc.: 0.388; Alpha RMSE: 0.480; Beta RMSE: 0.897

[Epoch 800] ELBO: 16110; Loglik: -13011; Acc.: 0.388; Alpha RMSE: 0.441; Beta RMSE: 0.864

[Epoch 900] ELBO: 15663; Loglik: -12956; Acc.: 0.388; Alpha RMSE: 0.362; Beta RMSE: 0.842

[Epoch 1000] ELBO: 15560; Loglik: -12768; Acc.: 0.388; Alpha RMSE: 0.304; Beta RMSE: 0.801

[Epoch 1100] ELBO: 15218; Loglik: -12865; Acc.: 0.388; Alpha RMSE: 0.218; Beta RMSE: 0.818

[Epoch 1200] ELBO: 15551; Loglik: -12812; Acc.: 0.388; Alpha RMSE: 0.185; Beta RMSE: 0.777

[Epoch 1300] ELBO: 15486; Loglik: -12795; Acc.: 0.388; Alpha RMSE: 0.154; Beta RMSE: 0.754

[Epoch 1400] ELBO: 15658; Loglik: -12873; Acc.: 0.388; Alpha RMSE: 0.118; Beta RMSE: 0.735

[Epoch 1500] ELBO: 15126; Loglik: -12768; Acc.: 0.388; Alpha RMSE: 0.114; Beta RMSE: 0.701

[Epoch 1600] ELBO: 15156; Loglik: -12858; Acc.: 0.388; Alpha RMSE: 0.097; Beta RMSE: 0.681

[Epoch 1700] ELBO: 15256; Loglik: -13074; Acc.: 0.388; Alpha RMSE: 0.122; Beta RMSE: 0.651

[Epoch 1800] ELBO: 15664; Loglik: -13086; Acc.: 0.388; Alpha RMSE: 0.115; Beta RMSE: 0.631

[Epoch 1900] ELBO: 15132; Loglik: -12921; Acc.: 0.388; Alpha RMSE: 0.139; Beta RMSE: 0.588

[Epoch 2000] ELBO: 15055; Loglik: -12893; Acc.: 0.388; Alpha RMSE: 0.132; Beta RMSE: 0.545

[Epoch 2100] ELBO: 15116; Loglik: -12993; Acc.: 0.388; Alpha RMSE: 0.135; Beta RMSE: 0.523

[Epoch 2200] ELBO: 15692; Loglik: -13045; Acc.: 0.388; Alpha RMSE: 0.161; Beta RMSE: 0.496

[Epoch 2300] ELBO: 15144; Loglik: -13066; Acc.: 0.388; Alpha RMSE: 0.157; Beta RMSE: 0.455

[Epoch 2400] ELBO: 15099; Loglik: -13100; Acc.: 0.388; Alpha RMSE: 0.142; Beta RMSE: 0.431

[Epoch 2500] ELBO: 15055; Loglik: -13017; Acc.: 0.388; Alpha RMSE: 0.137; Beta RMSE: 0.404

[Epoch 2600] ELBO: 14877; Loglik: -13085; Acc.: 0.388; Alpha RMSE: 0.132; Beta RMSE: 0.374

[Epoch 2700] ELBO: 14887; Loglik: -13246; Acc.: 0.388; Alpha RMSE: 0.135; Beta RMSE: 0.328

[Epoch 2800] ELBO: 14818; Loglik: -13222; Acc.: 0.388; Alpha RMSE: 0.114; Beta RMSE: 0.323

[Epoch 2900] ELBO: 14761; Loglik: -13240; Acc.: 0.388; Alpha RMSE: 0.127; Beta RMSE: 0.290

[Epoch 3000] ELBO: 14801; Loglik: -13267; Acc.: 0.388; Alpha RMSE: 0.130; Beta RMSE: 0.259

[Epoch 3100] ELBO: 14677; Loglik: -13307; Acc.: 0.388; Alpha RMSE: 0.126; Beta RMSE: 0.235

[Epoch 3200] ELBO: 14849; Loglik: -13456; Acc.: 0.388; Alpha RMSE: 0.126; Beta RMSE: 0.201

[Epoch 3300] ELBO: 14983; Loglik: -13386; Acc.: 0.388; Alpha RMSE: 0.122; Beta RMSE: 0.184

[Epoch 3400] ELBO: 14618; Loglik: -13325; Acc.: 0.388; Alpha RMSE: 0.117; Beta RMSE: 0.187

[Epoch 3500] ELBO: 14613; Loglik: -13383; Acc.: 0.388; Alpha RMSE: 0.109; Beta RMSE: 0.147

[Epoch 3600] ELBO: 14755; Loglik: -13511; Acc.: 0.388; Alpha RMSE: 0.117; Beta RMSE: 0.139

[Epoch 3700] ELBO: 14570; Loglik: -13450; Acc.: 0.388; Alpha RMSE: 0.102; Beta RMSE: 0.118

[Epoch 3800] ELBO: 14679; Loglik: -13503; Acc.: 0.388; Alpha RMSE: 0.087; Beta RMSE: 0.099

[Epoch 3900] ELBO: 14716; Loglik: -13575; Acc.: 0.388; Alpha RMSE: 0.086; Beta RMSE: 0.099

[Epoch 4000] ELBO: 14712; Loglik: -13595; Acc.: 0.388; Alpha RMSE: 0.083; Beta RMSE: 0.079

[Epoch 4100] ELBO: 14551; Loglik: -13563; Acc.: 0.388; Alpha RMSE: 0.085; Beta RMSE: 0.079

[Epoch 4200] ELBO: 14475; Loglik: -13448; Acc.: 0.388; Alpha RMSE: 0.065; Beta RMSE: 0.081

[Epoch 4300] ELBO: 14515; Loglik: -13579; Acc.: 0.388; Alpha RMSE: 0.074; Beta RMSE: 0.097

[Epoch 4400] ELBO: 14535; Loglik: -13639; Acc.: 0.388; Alpha RMSE: 0.078; Beta RMSE: 0.088

[Epoch 4500] ELBO: 14596; Loglik: -13657; Acc.: 0.388; Alpha RMSE: 0.069; Beta RMSE: 0.084

[Epoch 4600] ELBO: 14530; Loglik: -13648; Acc.: 0.388; Alpha RMSE: 0.071; Beta RMSE: 0.109

[Epoch 4700] ELBO: 14518; Loglik: -13652; Acc.: 0.388; Alpha RMSE: 0.056; Beta RMSE: 0.090

[Epoch 4800] ELBO: 14489; Loglik: -13636; Acc.: 0.388; Alpha RMSE: 0.062; Beta RMSE: 0.119

[Epoch 4900] ELBO: 14567; Loglik: -13659; Acc.: 0.388; Alpha RMSE: 0.054; Beta RMSE: 0.112

[Epoch 5000] ELBO: 14428; Loglik: -13642; Acc.: 0.388; Alpha RMSE: 0.059; Beta RMSE: 0.132

[Epoch 5100] ELBO: 14510; Loglik: -13739; Acc.: 0.388; Alpha RMSE: 0.045; Beta RMSE: 0.133

[Epoch 5200] ELBO: 14503; Loglik: -13712; Acc.: 0.388; Alpha RMSE: 0.055; Beta RMSE: 0.137

[Epoch 5300] ELBO: 14558; Loglik: -13804; Acc.: 0.388; Alpha RMSE: 0.083; Beta RMSE: 0.156

[Epoch 5400] ELBO: 14517; Loglik: -13791; Acc.: 0.388; Alpha RMSE: 0.060; Beta RMSE: 0.155

[Epoch 5500] ELBO: 14517; Loglik: -13768; Acc.: 0.388; Alpha RMSE: 0.061; Beta RMSE: 0.152

[Epoch 5600] ELBO: 14516; Loglik: -13808; Acc.: 0.388; Alpha RMSE: 0.057; Beta RMSE: 0.161

[Epoch 5700] ELBO: 14434; Loglik: -13729; Acc.: 0.388; Alpha RMSE: 0.060; Beta RMSE: 0.146

[Epoch 5800] ELBO: 14447; Loglik: -13768; Acc.: 0.388; Alpha RMSE: 0.073; Beta RMSE: 0.159

[Epoch 5900] ELBO: 14459; Loglik: -13792; Acc.: 0.388; Alpha RMSE: 0.069; Beta RMSE: 0.167

[Epoch 6000] ELBO: 14472; Loglik: -13818; Acc.: 0.388; Alpha RMSE: 0.063; Beta RMSE: 0.168

[Epoch 6100] ELBO: 14475; Loglik: -13813; Acc.: 0.388; Alpha RMSE: 0.066; Beta RMSE: 0.166

[Epoch 6200] ELBO: 14473; Loglik: -13835; Acc.: 0.388; Alpha RMSE: 0.053; Beta RMSE: 0.163

[Epoch 6300] ELBO: 14443; Loglik: -13823; Acc.: 0.388; Alpha RMSE: 0.065; Beta RMSE: 0.170

[Epoch 6400] ELBO: 14468; Loglik: -13811; Acc.: 0.388; Alpha RMSE: 0.075; Beta RMSE: 0.162

[Epoch 6500] ELBO: 14528; Loglik: -13912; Acc.: 0.388; Alpha RMSE: 0.075; Beta RMSE: 0.176

[Epoch 6600] ELBO: 14458; Loglik: -13852; Acc.: 0.388; Alpha RMSE: 0.075; Beta RMSE: 0.172

[Epoch 6700] ELBO: 14395; Loglik: -13817; Acc.: 0.388; Alpha RMSE: 0.065; Beta RMSE: 0.160

[Epoch 6800] ELBO: 14486; Loglik: -13864; Acc.: 0.388; Alpha RMSE: 0.090; Beta RMSE: 0.172

[Epoch 6900] ELBO: 14474; Loglik: -13883; Acc.: 0.388; Alpha RMSE: 0.077; Beta RMSE: 0.173

Elapsed time: 175.0668683052063

True alpha: [ 1 -1]

Est. alpha: [ 0.9782518 -0.88276696]

BETA_X0: 0.978

BETA_X1: -0.883

True zeta: [ 1 -1]

Est. zeta: [ 1.1570988 -1.1744796]

BETA_X2: 1.157

BETA_X3: -1.174

CPU times: user 21min 17s, sys: 1.53 s, total: 21min 18s

Wall time: 2min 58s

# log(true_cutoffs)=[-0.7 -0.2 0.5]

Lets now compare the inferred cutoffs with the true cutoffs: log(true_cutoffs) = [-0.7 -0.2 0.5]

The ones that we infered are:

torch.log(mxl.softplus(mxl.kappa_mu))

tensor([-0.6353, -0.1080, 0.5551], device='cuda:0', grad_fn=<LogBackward0>)

Quite similar values!

The “results” dictionary containts a summary of the results of variational inference, including means of the posterior approximations for the different parameters in the model:

results

{'Estimation time': 64.12379693984985,

'Est. alpha': array([ 1.0848361, -0.9402135], dtype=float32),

'Est. zeta': array([ 1.0654774, -1.1859826], dtype=float32),

'Est. beta_n': array([[ 0.7436627 , -1.5113661 ],

[ 1.1884117 , -1.5241228 ],

[ 0.5740844 , -1.2508584 ],

...,

[ 0.94637936, -1.5531573 ],

[ 1.308997 , -1.1411221 ],

[ 1.1743374 , -1.6200343 ]], dtype=float32),

'ELBO': 14481.8515625,

'Loglikelihood': -13628.396484375,

'Accuracy': 0.38760000467300415}

This interface is currently being improved to include additional output information, but additional information can be obtained from the attributes of the “mxl” object for now.

Implementation details¶

TODO